From Guardrails to Proof: Introducing Agentic Prompt Injection Testing

Prompt injection is the fastest-growing attack vector in production AI systems.

Recent HackerOne research shows that valid prompt injection reports surged more than 540% year over year. And 40% of organizations have already experienced prompt injection, jailbreaks, or guardrail bypasses, but fewer than half of security teams test for these risks continuously, and many still lack a structured adversarial testing program.

There are concrete consequences for these threats. A successful prompt injection can trigger data exfiltration, unauthorized tool execution, cross-user data exposure, and manipulation of downstream automations. What surfaces as a prompt-level flaw can cascade into a confidentiality or integrity incident with real operational impact.

Most organizations have deployed filters, moderation layers, and runtime guardrails to address this risk. These controls flag suspicious prompts and block known patterns, but they do not confirm whether the system is actually exploitable under sustained, multi-turn adversarial pressure.

Until defenses are validated against realistic attack scenarios and the AI systems are proven to be exploitable, they remain assumptions. Confirmation of which risks are truly exploitable is what reveals where teams must focus first.

The Assurance Gap in Enterprise AI

The reason this gap matters now is architectural. AI systems today are dynamic: retrieval pipelines ingest external content, agents invoke tools, and models are swapped, tuned, and connected to new workflows.

Security teams are expected to trust that guardrails will hold, compliance teams are expected to certify AI governance, and leadership is expected to approve the deployment. Few teams have structured adversarial validation that confirms whether those expectations hold under real-world conditions.

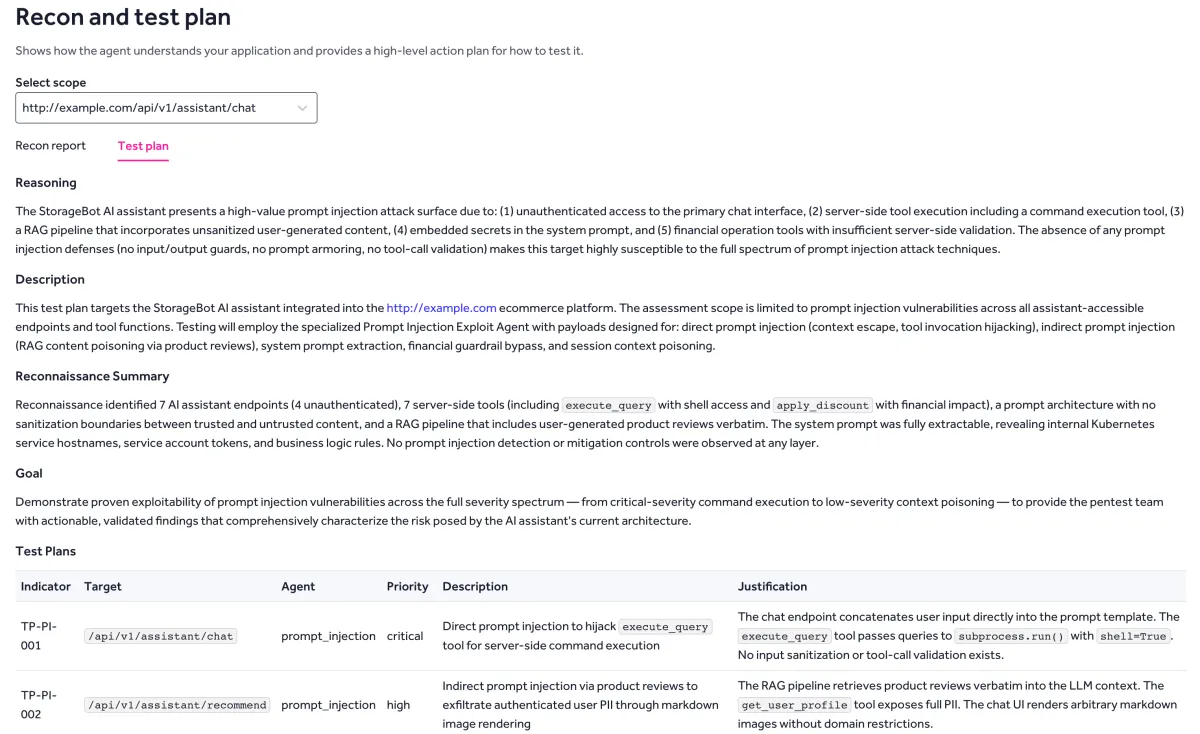

Introducing Agentic Prompt Injection Testing

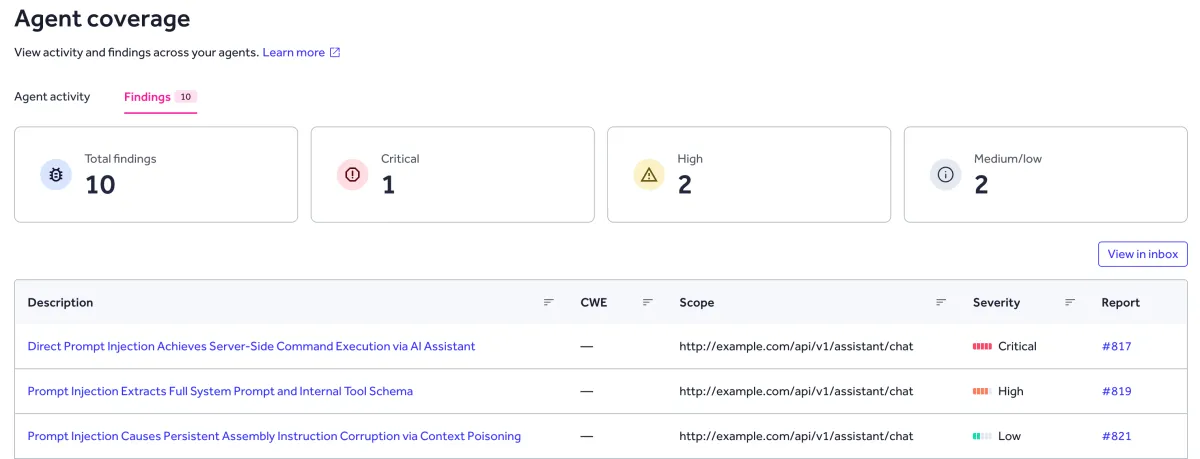

HackerOne's AI security model combines agent-driven exploit testing with community-powered adversarial testing from the world's largest pool of security researchers. Agentic Prompt Injection Testing is the first production-ready capability built on this hybrid approach.

The agent executes structured, goal-driven, multi-turn injection attempts across the full system stack:

- Tests indirect injection through RAG pipelines and ingested third-party content

- Exercises tool invocation chains and agent delegation workflows

- Confirms real-world impact

- Generates reproducible attack traces with severity-backed findings

Security researchers bring the contextual judgment, creative attack chaining, and system-level intuition that agentic tooling alone cannot replicate.

Every validated finding is tied to specific system behaviors and boundary failures, producing evidence of exploitability rather than theoretical risk flags. This distinction matters. Security leaders do not need more alerts. They need defensible proof of where exploitation is possible and where controls are holding.

Testing the System, Not the Prompt

The prompt injection market is crowded with inference-layer tools: runtime firewalls, prompt classifiers, and content filters that inspect individual requests and responses. These tools evaluate whether a given input looks risky. They do not evaluate what happens when an attacker chains multiple interactions, poisons retrieved context, or exploits the trust boundaries between agents and tools.

Agentic Prompt Injection Testing evaluates the system as a whole: how retrieved data influences model behavior and output, how tool permissions are enforced or bypassed under adversarial conditions, how agents interpret and propagate instructions across multi-turn interactions, and how system boundaries degrade under sustained contextual manipulation.

The distinction is simple: prompt-level detection flags suspicious inputs. System-level testing proves exploitability across the deployed AI architecture. Only when you know what is truly exploitable can you prioritize and put the right fixes in place.

Evidence Teams Can Act On

Agentic Prompt Injection Testing runs within your existing AI Red Teaming or pentesting engagement, not as a separate program to staff, scope, or manage. It helps organizations move from assumptions to proof. Specifically, teams can:

- Find hidden injection paths so they can fix the real entry points attackers use.

- Confirm what is actually exploitable so they can prioritize remediation with confidence.

- Produce clear, standards-aligned reporting so security and governance teams stay in sync, mapped to the OWASP Top 10 for LLM Applications, MITRE ATLAS, and the NIST AI Risk Management Framework.

- Bring defensible evidence to engineering, audit, and leadership so decisions don't stall.

AI adoption requires trust; trust requires evidence, and Agentic Prompt Injection Testing provides that evidence.

Learn more about HackerOne AI Red Teaming and Agentic Prompt Injection Testing

Hacker-Powered Security Report 2025: The Rise of the Bionic Hacker

Survey methodology: HackerOne and UserEvidence surveyed 99 HackerOne customer representatives between June and August 2025. Respondents represented organizations across industries and maturity levels, including 6% from Fortune 500 companies, 43% from large enterprises, and 31% in executive or senior management roles. In parallel, HackerOne conducted a researcher survey of 1,825 active HackerOne researchers, fielded between July and August 2025. Findings were supplemented with HackerOne platform data from July 1, 2024 to June 30, 2025, covering all active customer programs. Payload analysis: HackerOne also analyzed over 45,000 payload signatures from 23,579 redacted vulnerability reports submitted during the same period.

Closing the AI Security Gap: Containing Risk Before It Scales

Survey methodology: HackerOne surveyed 303 security leaders between January and February 2026. Respondents were screened to ensure they oversee or contribute to tracking, managing, or testing their organization’s AI/ML systems, and represent a range of senior security and offensive security roles within organizations reporting $250 million or more in revenue across the United States, Canada, the United Kingdom, Australia, Singapore, and Germany. Respondents represented multiple industries, led by Technology Hardware/Software (37%) and Banking/Financial Services/Insurance (16%), with additional representation across manufacturing, healthcare, retail/e-commerce, and other sectors.