Research Report

The Expanding AI Attack Surface

Closing Risk Gaps Through Continuous Security

In the report

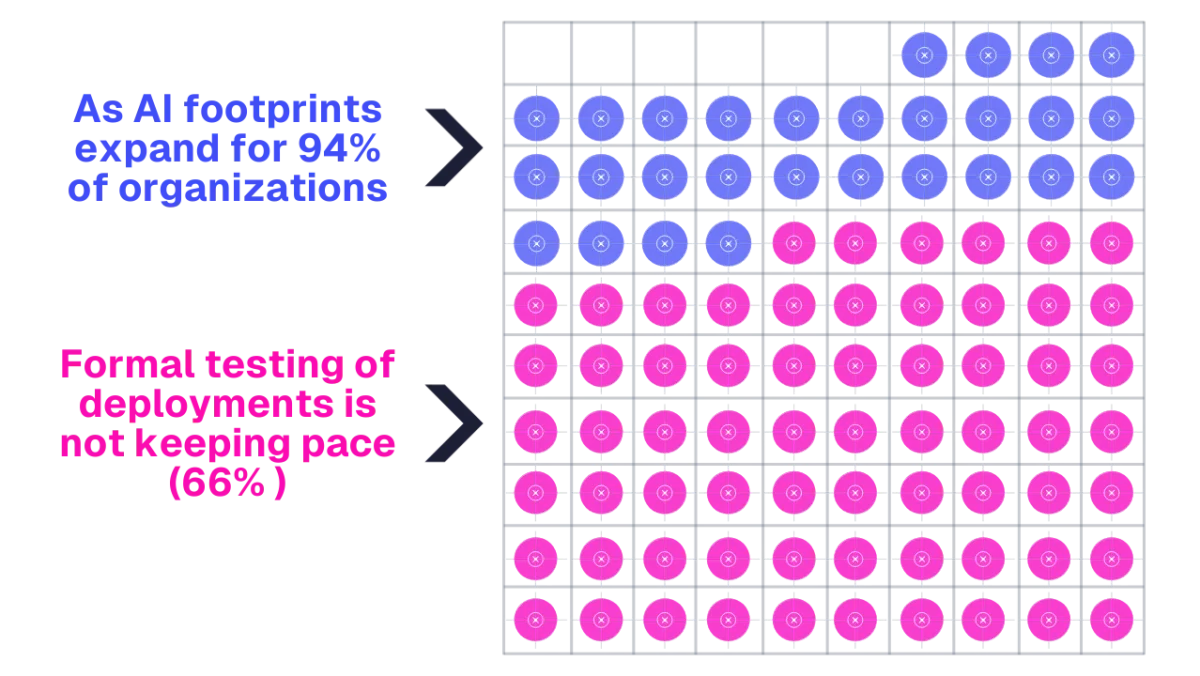

- A clear view of the AI Security Coverage Gap, which arises when organizations test 60% or less of their AI footprint.

- Evidence that closing the gap improves outcomes: organizations that test most of their AI systems are 16% less likely to report an AI-related attack or vulnerability.

- A practical way to think about AI security testing maturity: coverage vs breadth, and why “just more testing” isn’t the answer.

- A breakdown of common AI security testing methods and when to use them to improve visibility and reduce blind spots.

- Guidance on where testing is often uneven, and why risk grows fastest where models meet real systems.