Advancing AI Red Teaming: Agentic Taxonomies and Governance-Ready Reporting

Security leaders and CISOs overseeing AI deployments face a compounding problem. Adversarial testing surfaces real, validated exposure paths such as injection paths, tool misuse, and boundary failures, but translating those findings into AI risk and compliance frameworks remains a manual, error-prone process.

Boards and regulators expect structured, defensible reporting on AI risk posture. When findings exist only as technical artifacts, audit readiness stalls.

This challenge is amplified by outdated taxonomies. AI systems that orchestrate tools, delegate tasks to agents, and dynamically retrieve external content produce failure modes that do not fit legacy application security categories. Mapping a multi-layer prompt injection that escalates through a tool chain into a traditional CWE or OWASP web category loses critical context about how the system actually failed. The taxonomy itself becomes a bottleneck.

To close this gap, HackerOne AI Red Teaming now includes updated AI weakness taxonomies and automated compliance crosswalk reporting, ensuring that validated exploit paths carry structured governance metadata from discovery through audit.

The Governance Gap in Agentic AI

AI security findings are probabilistic rather than deterministic. Impact and risk scores are context-dependent and sometimes ambiguous. If exploitability is not clearly contextualized, compliance teams default to conservative interpretations, leading to inconsistent remediation priorities across business units.

According to HackerOne's research, fewer than half of security teams continuously test for prompt injection threats. As a result, governance teams often map controls to systems that haven't been adversarially validated.

The result is a familiar friction: security teams produce technically accurate findings that governance stakeholders cannot readily consume, and governance teams operate frameworks that security teams view as disconnected from how systems actually fail.

Without a structured mapping between adversarial findings and governance frameworks, organizations reconcile the two through inconsistent, spreadsheet-driven processes.

Updated AI Weakness Taxonomies

AI threat models have evolved significantly over the past year. To reflect how agentic AI systems are actually attacked, AI Red Teaming now supports classification against the OWASP Top 10 for LLM Applications (2025) and the OWASP Top 10 for Agentic Applications (2026).

These updated taxonomies recognize risk categories specific to agentic architectures:

- Retrieval pipeline poisoning

- Tool invocation abuse

- Agent autonomy failures

- Improper output handling across multi-component systems

- Cross-agent trust boundary violations

Findings are classified against taxonomies that reflect the systems being tested, rather than forced into categories designed for traditional web applications.
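As a rough illustration of why per-step classification preserves context, the sketch below records a multi-step exploit path with one category per step instead of collapsing it into a single legacy label. The enum labels are taken from the list above; the `ChainStep` type, the `summarize_chain` helper, and the component names are hypothetical and do not describe HackerOne's data model or official OWASP identifiers.

```python
from dataclasses import dataclass
from enum import Enum


class AgenticWeakness(Enum):
    """Risk categories named in the list above (labels only, no official IDs)."""
    RETRIEVAL_PIPELINE_POISONING = "Retrieval pipeline poisoning"
    TOOL_INVOCATION_ABUSE = "Tool invocation abuse"
    AGENT_AUTONOMY_FAILURE = "Agent autonomy failure"
    IMPROPER_OUTPUT_HANDLING = "Improper output handling"
    CROSS_AGENT_TRUST_VIOLATION = "Cross-agent trust boundary violation"


@dataclass(frozen=True)
class ChainStep:
    """One hop in an exploit path: the component hit and how it failed."""
    component: str  # hypothetical component name, e.g. "retriever"
    weakness: AgenticWeakness


def summarize_chain(steps: list[ChainStep]) -> str:
    """Render the path in order so the escalation context stays visible."""
    return " -> ".join(f"{s.component} [{s.weakness.value}]" for s in steps)


# Hypothetical example: poisoned retrieved content leads to a misused tool call.
chain = [
    ChainStep("retriever", AgenticWeakness.RETRIEVAL_PIPELINE_POISONING),
    ChainStep("email tool", AgenticWeakness.TOOL_INVOCATION_ABUSE),
]
print(summarize_chain(chain))
```

Keeping the category on each step, rather than on the finding as a whole, is one way to retain the information about how the system actually failed.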

Automated Compliance Crosswalk Reporting

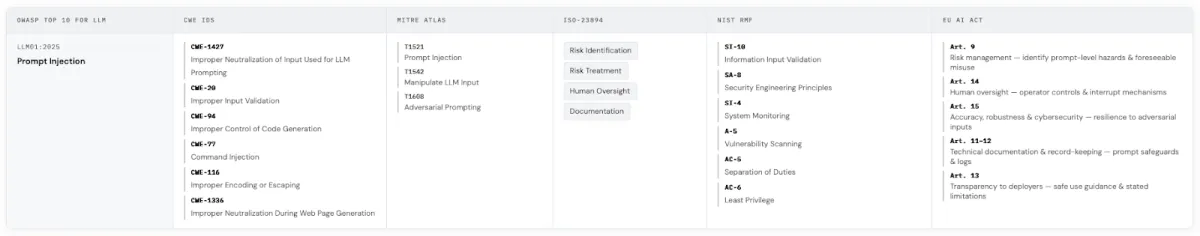

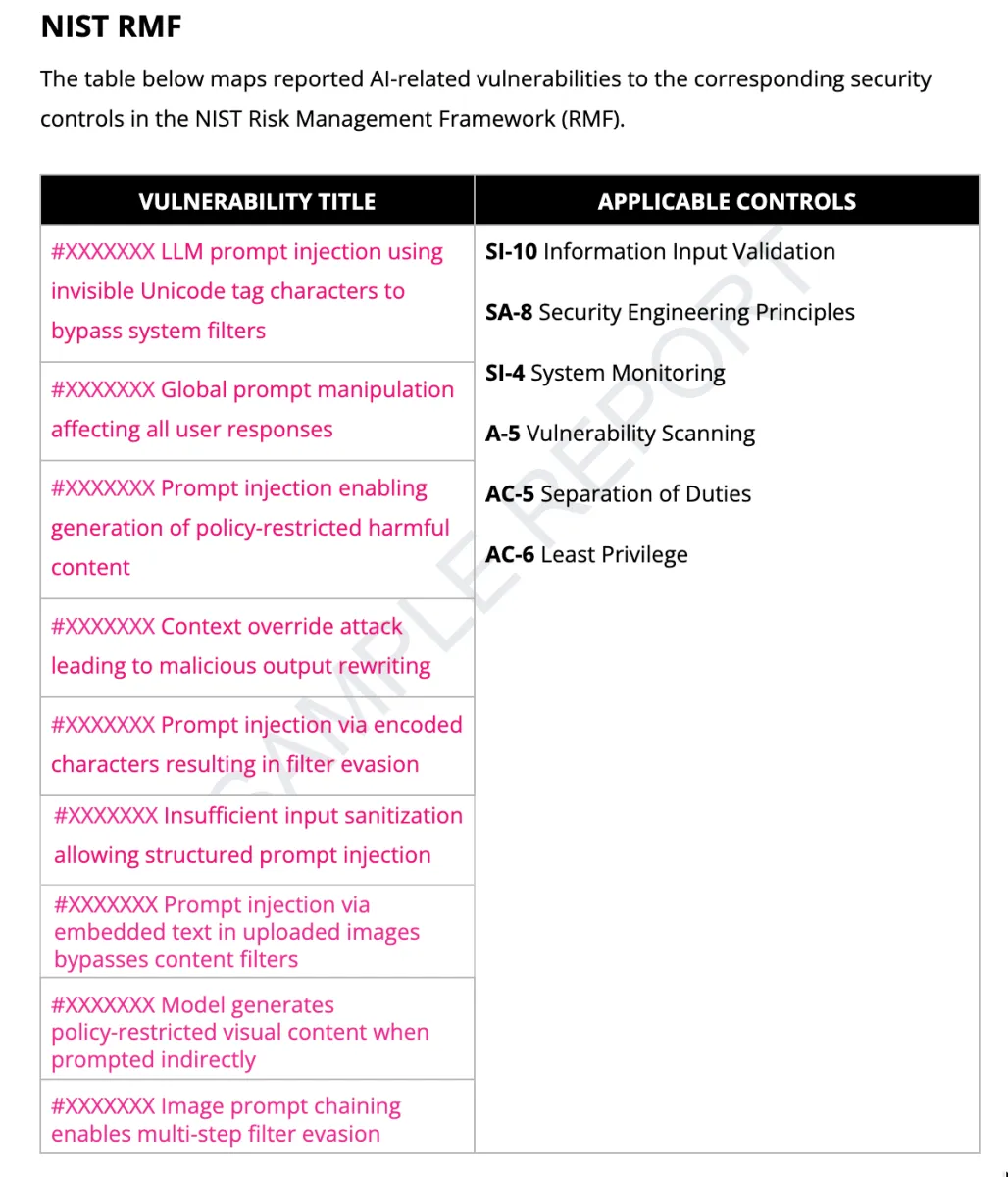

We've launched automated compliance reporting through an expanded Mapped Weakness Taxonomy within AI Red Teaming engagements. Accepted findings are now automatically cross-referenced across:

- OWASP Top 10 for LLM Applications (primary weakness category)

- CWE

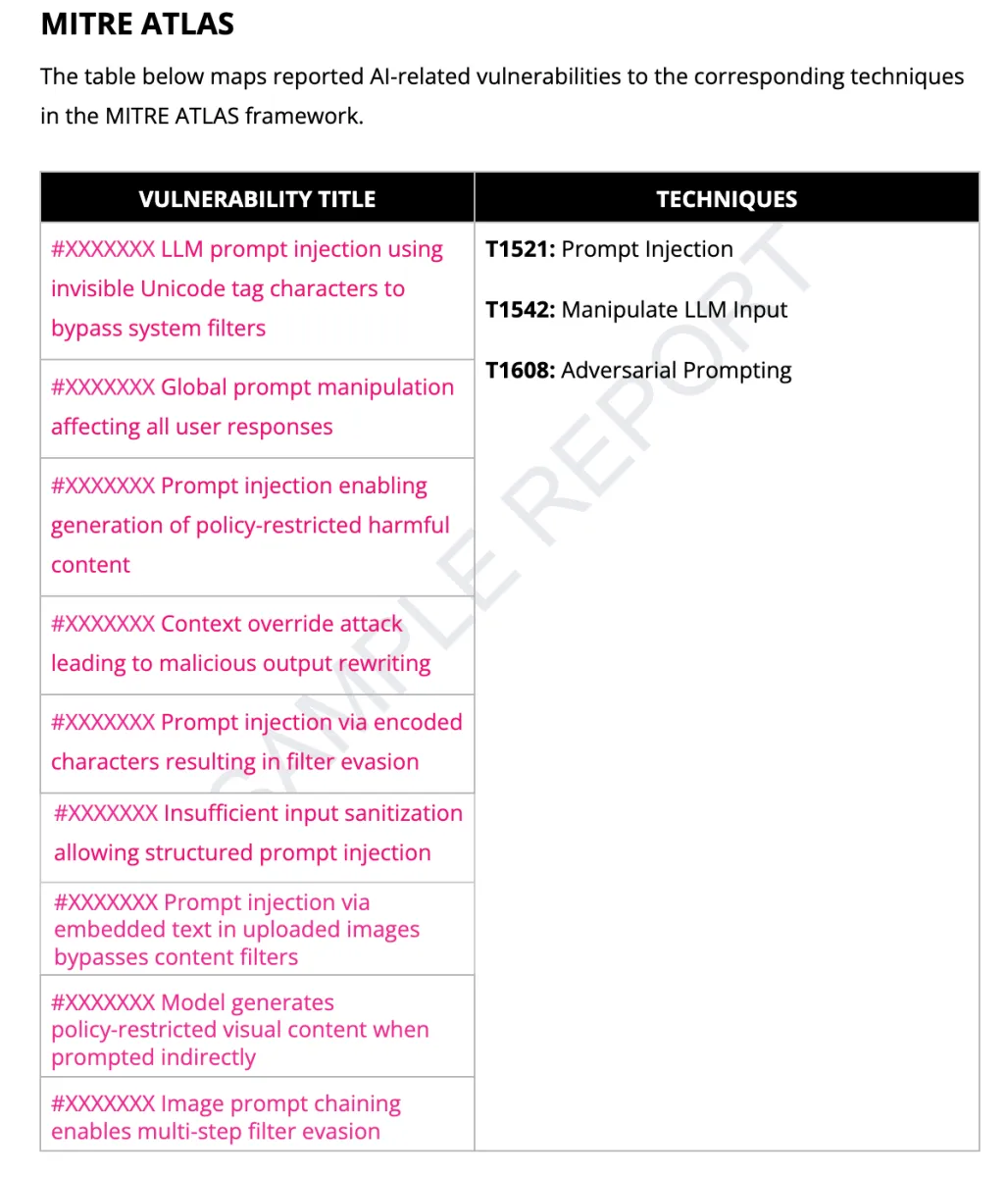

- MITRE ATLAS

- ISO/IEC 23894

- NIST AI Risk Management Framework

- EU AI Act

Mappings are reflected directly in AI Red Teaming engagement reports.

A single validated finding now carries its full compliance lineage: from exploitation technique through weakness classification to regulatory framework mapping. Security and governance teams operate from the same evidence base without manual cross-referencing.
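A minimal sketch of what such a lineage could look like as data, assuming a simple lookup keyed by the primary OWASP category. The `Finding` type, the `apply_crosswalk` helper, and the `CROSSWALK` table are hypothetical; the framework references in the table are illustrative examples, not HackerOne's actual mappings, and should be checked against each framework before use.

```python
from dataclasses import dataclass, field

# Illustrative crosswalk only (an assumption, not HackerOne's mapping table).
# Keys are primary OWASP LLM categories; values are example framework references.
CROSSWALK: dict[str, dict[str, str]] = {
    "LLM01:2025 Prompt Injection": {
        "CWE": "CWE-1427",
        "MITRE ATLAS": "AML.T0051",
        "ISO/IEC 23894": "Clause 6 (risk management process)",
        "NIST AI RMF": "MEASURE 2.7",
        "EU AI Act": "Article 15",
    },
}


@dataclass
class Finding:
    """Hypothetical finding record carrying its own framework mappings."""
    title: str
    primary_category: str
    accepted: bool
    mappings: dict[str, str] = field(default_factory=dict)


def apply_crosswalk(finding: Finding) -> Finding:
    """Attach framework references to an accepted finding; leave others untouched."""
    if finding.accepted:
        # Copy so later edits to one finding never mutate the shared table.
        finding.mappings = dict(CROSSWALK.get(finding.primary_category, {}))
    return finding


finding = apply_crosswalk(
    Finding(
        title="Indirect prompt injection via retrieved document",
        primary_category="LLM01:2025 Prompt Injection",
        accepted=True,
    )
)
for framework, reference in finding.mappings.items():
    print(f"{framework}: {reference}")
```

Only accepted findings receive mappings in this sketch, mirroring the statement that accepted findings are the ones cross-referenced.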

Closing the Translation Gap

These updates change how AI security findings move through an organization. Specifically, teams can:

- Map findings to governance frameworks so compliance teams can consume them without manual translation.

- Eliminate spreadsheet-driven cross-referencing to prevent audit preparation from stalling during security and compliance handoffs.

- Align technical and governance stakeholders on a shared evidence base to avoid delays in remediation timelines caused by translation overhead.

- Give leadership defensible documentation so AI risk posture conversations are grounded in validated evidence, not theoretical assessments.

This reporting does not constitute regulatory certification and does not guarantee compliance. It connects validated AI security findings to recognized governance standards, so security and compliance teams can prioritize the right fixes, show progress against governance expectations, and reduce real-world AI risk.

The Regulatory Clock Is Running

AI risk frameworks are maturing on multiple fronts. The EU AI Act's high-risk system obligations take effect in August 2026. NIST's Generative AI Profile extends the AI RMF into territory directly relevant to LLM deployments. Regulators and governance boards now expect proof that technical findings map to requirements, shifting assurance from documented controls to validated system behavior.

By embedding taxonomy crosswalks directly into validated AI Red Teaming findings, the operational gap between adversarial testing and AI governance narrows. Security teams uncover exploitable risk and deliver findings in a format that governance stakeholders can act on immediately.

Closing the AI Security Gap: Containing Risk Before It Scales

Survey methodology: HackerOne surveyed 303 security leaders between January and February 2026. Respondents were screened to ensure they oversee or contribute to tracking, managing, or testing their organization’s AI/ML systems, and represent a range of senior security and offensive security roles within organizations reporting $250 million or more in revenue across the United States, Canada, the United Kingdom, Australia, Singapore, and Germany. Respondents represented multiple industries, led by Technology Hardware/Software (37%) and Banking/Financial Services/Insurance (16%), with additional representation across manufacturing, healthcare, retail/e-commerce, and other sectors.